When Facebook released GraphQL in 2015 they released two separate things; a specification and a working implementation of the specification in JavaScript called GraphQL.js.

GraphQL.js acts as a “reference implementation” for people implementing GraphQL in other languages but it’s also a polished production-worthy JavaScript library at the heart of the JavaScript GraphQL ecosystem.

GraphQL.js gives us, among other things, the graphql function, which is what does the work of turning a query into a response.

graphql(schema, `{ hello }`)

{

"data": {

"hello": "world"

}

}

The graphql function above is taking two arguments, one is the { hello } query, the other, the schema could use a little explanation.

The Schema

In GraphQL you define types for all the things you want to make available.

GraphQL.org has a simple example schema written in Schema Definition Language.

type Book {

title: String

author: Author

price: Float

}

type Author {

name: String

books: [Book]

}

type Query {

books: [Book]

authors: [Author]

}

There are a few types we defined (Book, Author, Query) and some that GraphQL already knew about (String, Float). All of those types are collectively referred to as our schema.

You can define your schema with Schema Definition Language (SDL) as above or, as we will do, use plain JavaScript. It’s up to you. For our little adventure today I’ll use JavaScript and define a single field called “hello” on the mandatory root Query type.

var { GraphQLObjectType, GraphQLString, GraphQLSchema } = require('graphql')

var query = new GraphQLObjectType({

name: 'Query',

fields: {

hello: {type: GraphQLString, resolve: () => 'world'}

}

})

var schema = new GraphQLSchema({ query })

The queries we receive are written in the GraphQL language, which will be checked against the types and fields we defined in our schema. In the schema above we’ve defined a single field on the Query type, and mapped a function that returns the string ‘world’ to that field.

GraphQL is a language like JavaScript or Python but the inner workings of other languages aren’t usually as visible or approachable as GraphQL.js make them. Looking at how GraphQL works can tell us a lot about how to use it well.

The life of a GraphQL query

Going from a query like { hello } to a JSON response happens in four phases:

- Lexing

- Parsing

- Validation

- Execution

Let’s take that little { hello } query and see what running it through that function looks like.

Lexing: turning strings into tokens

The query { hello } is a string of characters that presumably make up a valid query in the GraphQL language. The first step in the process is splitting that string into tokens. This work is done with a lexer.

var {createLexer, Source} = require('graphql/language')

var lexer = createLexer(new Source(`{ hello }`))

The lexer can tell us the current token, and we can advance the lexer to the next token by calling lexer.advance()

lexer.token

Tok {

kind: '',

start: 0,

end: 0,

line: 0,

column: 0,

value: undefined,

prev: null,

next: null }

lexer.advance()

Tok {

kind: '{',

start: 0,

end: 1,

line: 1,

column: 1,

value: undefined,

next: null }

lexer.advance()

Tok {

kind: 'Name',

start: 1,

end: 6,

line: 1,

column: 2,

value: 'hello',

next: null }

lexer.advance()

Tok {

kind: '}',

start: 6,

end: 7,

line: 1,

column: 7,

value: undefined,

next: null }

lexer.advance()

Tok {

kind: '',

start: 7,

end: 7,

line: 1,

column: 8,

value: undefined,

next: null }

It’s important to note that we are advancing by token not by character. Characters like commas, spaces, and new lines are all allowed in GraphQL since they make code nice to read, but the lexer will skip right past them in search of the next meaningful token.

These two queries will produce the same tokens you see above.

createLexer(new Source(`{ hello }`))

createLexer(new Source(` ,,,\r\n{,\n,,hello,\n,},,\t,\r`))

The lexer also represents the first pass of input validation that GraphQL provides. Invalid characters are rejected by the lexer.

createLexer(new Source("*&^%$")).advance()

Syntax Error: Cannot parse the unexpected character "*"

Parsing: turning tokens into nodes, and nodes into trees

Parsing is about using tokens to build higher level objects called nodes.

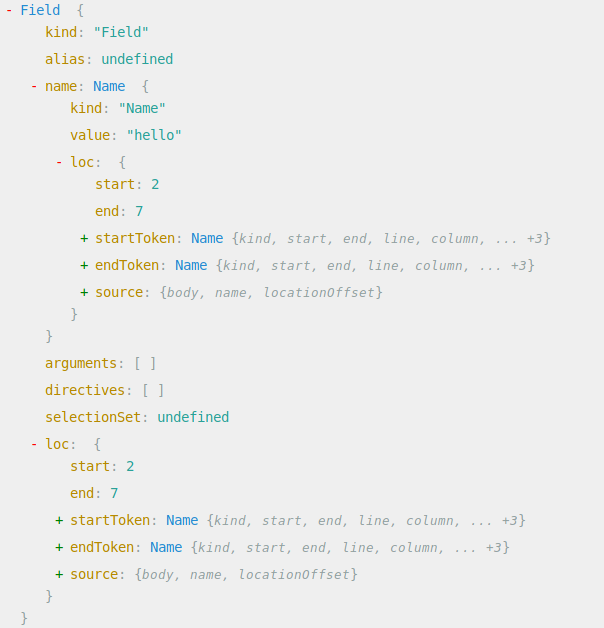

If you look you can see the tokens in there but nodes have more going on.

If you use a tool like grep or ripgrep to search through the source of GraphQL.js you will see where these nodes are coming from. There specialised parsing functions for each type of node, the majority of which are used internally by the parse function. These functions follow the pattern of accepting a lexer, and returning a node.

$ rg "function parse" src/language/parser.js

124:export function parse(

146:export function parseValue(

168:export function parseType(

183:function parseName(lexer: Lexer): NameNode {

197:function parseDocument(lexer: Lexer): DocumentNode {

212:function parseDefinition(lexer: Lexer): DefinitionNode {

246:function parseExecutableDefinition(lexer: Lexer): ExecutableDefinitionNode {

271:function parseOperationDefinition(lexer: Lexer): OperationDefinitionNode {

303:function parseOperationType(lexer: Lexer): OperationTypeNode

Using the parse function is a simple as passing it a GraphQL string. If we print the output of parse with some spacing we can see the what’s actually happening: it’s constructing a tree. Specifically, it’s an Abstract Syntax Tree (AST).

> var { parse } = require('graphql/language')

> console.log(JSON.stringify(parse("{hello}"), null, 2))

{

"kind": "Document",

"definitions": [

{

"kind": "OperationDefinition",

"operation": "query",

"variableDefinitions": [],

"directives": [],

"selectionSet": {

"kind": "SelectionSet",

"selections": [

{

"kind": "Field",

"name": {

"kind": "Name",

"value": "hello",

"loc": {

"start": 1,

"end": 6

}

},

"arguments": [],

"directives": [],

"loc": {

"start": 1,

"end": 6

}

}

],

"loc": {

"start": 0,

"end": 7

}

},

"loc": {

"start": 0,

"end": 7

}

}

],

"loc": {

"start": 0,

"end": 7

}

}

If you play with this, or a more deeply nested query you can see a patten emerge. You’ll see SelectionSets containing selections containing SelectionSets. With a structure like this, a function that calls itself would be able to walk it’s way down the this entire object. We’re all set up for some recursive evaluation.

Validation: Walking the tree with visitors

The reason for an AST is to enable us to do some processing, which is exactly what happens in the validation step. Here we are looking to make some decisions about the tree and how well it lines up with our schema.

For any of that to happen, we need a way to walk the tree and examine the nodes. For that there is a pattern called the Vistor pattern, which GraphQL.js provides an implementation of.

To use it we require the visit function and make a visitor.

var { visit } = require('graphql')

var depth = 0

var vistor = {

enter: node => {

depth++

console.log(' '.repeat(depth).concat(node.kind))

return node

},

leave: node => {

depth--

return node

},

}

Our vistor above has enter and leave functions attached to it. These names are significant since the visit function looks for them when it comes across a new node in the tree or moves on to the next node.

The visit function accepts an AST and a visitor and you can see our visitor at work printing out the kind of the nodes being encountered.

> visit(parse(`{ hello }`, visitor)

Document

OperationDefinition

SelectionSet

Field

Name

With the visit function providing a generic ability to traverse the tree, the next step is to use this ability to determine if this query is acceptable to us.

This happens with the validate function. By default, it seems to know that kittens are not a part of our schema.

var { validate } = require('graphql')

validate(schema, parse(`{ kittens }`))

// GraphQLError: Cannot query field "kittens" on type "Query"

The reason it knows that is that there is a third argument to the validate function. Left undefined, it defaults to an array of rules exported from ‘graphql/validation’. These “specifiedRules” are responsible for all the validations that ensure our query is safe to run.

> var { validate } = require('graphql')

> var { specifiedRules } = require('graphql/validation')

> specifiedRules

[ [Function: ExecutableDefinitions],

[Function: UniqueOperationNames],

[Function: LoneAnonymousOperation],

[Function: SingleFieldSubscriptions],

[Function: KnownTypeNames],

[Function: FragmentsOnCompositeTypes],

[Function: VariablesAreInputTypes],

[Function: ScalarLeafs],

[Function: FieldsOnCorrectType],

[Function: UniqueFragmentNames],

[Function: KnownFragmentNames],

[Function: NoUnusedFragments],

[Function: PossibleFragmentSpreads],

[Function: NoFragmentCycles],

[Function: UniqueVariableNames],

[Function: NoUndefinedVariables],

[Function: NoUnusedVariables],

[Function: KnownDirectives],

[Function: UniqueDirectivesPerLocation],

[Function: KnownArgumentNames],

[Function: UniqueArgumentNames],

[Function: ValuesOfCorrectType],

[Function: ProvidedRequiredArguments],

[Function: VariablesInAllowedPosition],

[Function: OverlappingFieldsCanBeMerged],

[Function: UniqueInputFieldNames] ]

validate(schema, parse(`{ kittens }`), specifiedRules)

// GraphQLError: Cannot query field "kittens" on type "Query"

In there you can see checks to ensure that the query only includes known types (KnownTypeNames) and things like variables having unique names (UniqueVariableNames).

This is the next level of input validation that GraphQL provides.

Rules are just visitors

If you dig into those rules (all in src/validation/rules/) you will realize that these are all just visitors.

In our first experiment with visitors, we just printed out the node kind. If we look at this again, we can see that even our tiny little query ends up with 5 levels of depth.

visit(parse(`{ hello }`, visitor)

Document // 1

OperationDefinition // 2

SelectionSet // 3

Field // 4

Name // 5

Let’s say for the sake of experimentation that 4 is all we will accept. To do that we’ll write ourselves a visitor, and then pass it into the third argument to validate.

var fourDeep = context => {

var depth = 0, maxDepth = 4 // 😈

return {

enter: node => {

depth++

if (depth > maxDepth)

context.reportError(new GraphQLError('💥', [node]))

}

return node

},

leave: node => { depth--; return node },

}

}

validate(schema, parse(`{ hello }`), [fourDeep])

// GraphQLError: 💥

If you are building a GraphQL API server, you can take a rule like this and pass it as one of the options to express-graphql, so your rule will be applied to all queries the server handles.

Execution: run resolvers. catch errors.

This us to the execution step. There isn’t much exported from ‘graphql/execution’. What’s worthy of note is here is the root object, and the defaultFieldResolver. This work in concert to ensure that wherever there isn’t a resolver function, by default you get the value for that fieldname on the root object.

var { execute, defaultFieldResolver } = require('graphql/execution')

var args = {

schema,

document: parse(`{ hello }`),

// value 0 in the "value of the previous resolver" chain

rootValue: {},

variableValues: {},

operationName: '',

fieldResolver: defaultFieldResolver,

}

execute(args)

{

"data": {

"hello": "world"

}

}

Why all that matters

For me the take-away in all this is a deeper appreciation of what GraphQL being a language implies.

First, giving your users a language is empowering them to ask for what they need. This is actually written directly into the spec:

GraphQL is unapologetically driven by the requirements of views and the front‐end engineers that write them. GraphQL starts with their way of thinking and requirements and builds the language and runtime necessary to enable that.

Empowering your users is always a good idea but server resources are finite, so you’ll need to think about putting limits somewhere. The fact that language evaluation is recursive means the amount of recursion and work your server is doing is determined by the person who writes the query. Knowing the mechanism to set limits on that (validation rules!) is an important security consideration.

That caution comes alongside a big security win. Formal languages and type systems are the most powerful tools we have for input validation. Rigorous input validation is one of the most powerful things we can do to increase the security of our systems. Making good use of the type system means that your code should never be run on bad inputs.

It’s because GraphQL is a language that it let’s us both empower users and increase security, and that is a rare combination indeed.